- The validity of Moore’s Law has come to an end as worldwide foundries struggle to keep up with the two-year term

- GPUs, once limited to graphically demanding applications, are now being used as computing accelerators

- High-performance computing (HPC) seems to be the new standard in managing today’s vast amounts of data

We’re combining the leaders of AI computing and high-speed networking and data processing into one company and, so, this is really quite extraordinary.

With these words, Nvidia’s CEO, Jensen Huang, commented on the acquisition of Mellanox during a TV appearance on CNBC’s Mad Money. The deal had been announced more than one year earlier, on March 11, 2019, but it had to go through a lengthy process before being finalized. The last step was approval by China’s State Administration for Market Regulation (China’s antitrust authority), which followed antitrust approval from the European Commission and Mexico as well as a waiting period required under US antitrust regulations.

The background: Nvidia’s billion-dollar shift

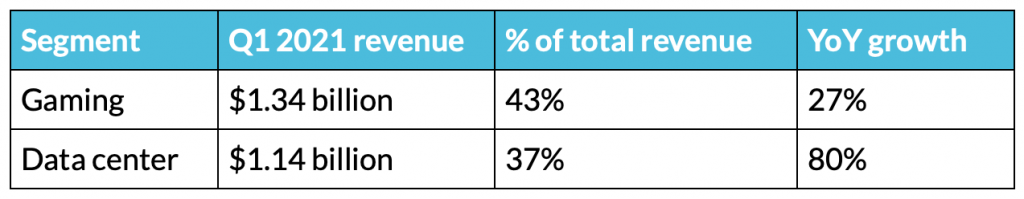

The $7-billion acquisition marks an important milestone for Nvidia, which has evolved into something more than the manufacturer of gaming video cards that the mainstream audience remembers. The financial results for the first quarter of fiscal year 2021 make it clear that the company has put considerable effort into the data center segment, whose revenue almost equals that of gaming. Perhaps more interesting is the segment’s staggering 80% growth compared to the previous year.

This shift in the company’s strategy is a clear sign that something has changed in the computing industry. For decades, the latter has relied on the development of microprocessors: faster chips have allowed the introduction of devices that have kept up with the increasing amount of information produced every day and with our changing lifestyles. All of this was envisioned in the famous article Cramming More Components onto Integrated Circuits, published in 1965 by Gordon Moore in Electronics magazine:

Integrated circuits will lead to such wonders as home computers — or at least terminals connected to a central computer — automatic controls for automobiles, and personal portable communications equipment. The electronic wristwatch needs only a display to be feasible today.

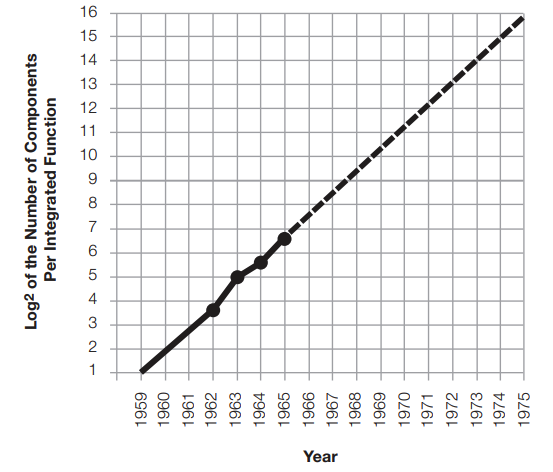

In the same piece, Moore also observed how the number of transistors that could be integrated into a single chip had been doubling every year and predicted that this trend would continue for at least a decade.

And that is precisely what happened. What is more, the exponential growth of microprocessors has been a fact well beyond that decade (even though Moore adjusted the term within which the number of transistors doubles to two years in 1975) — but this may be changing.
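To get a feel for the difference between the two doubling periods, here is a minimal sketch that projects a component count over ten years. The starting count of 64 and the function name are illustrative assumptions, not figures taken from Moore's article.

```python
def projected_count(start_count: int, years: float, doubling_period_years: float) -> float:
    """Project a component count that doubles once every `doubling_period_years`."""
    return start_count * 2 ** (years / doubling_period_years)


# Illustrative starting point (an assumption, chosen only to keep the numbers readable).
start = 64

yearly = projected_count(start, years=10, doubling_period_years=1)
biennial = projected_count(start, years=10, doubling_period_years=2)

print(f"Doubling every year for 10 years:      {yearly:,.0f}")
print(f"Doubling every two years for 10 years: {biennial:,.0f}")
```

Over a decade, doubling every year multiplies the count by 1,024, while doubling every two years multiplies it by 32.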

The end of Moore’s law?

In recent years, concerns have arisen about the chip industry’s ability to keep up with Moore’s law. According to some sources, the decline started as early as a decade ago. Others identify GlobalFoundries stepping out of the 7 nm race a couple of years ago as the beginning of the end. The reasons behind these speculations relate to both technical and economic limitations.

Technical limitations

One of the main issues with shrinking microprocessors is electrical leakage. To put it simply, the channels that carry the electrical signal from one transistor to another have gotten so narrow that containing the current within them has become physically difficult (especially after the introduction of 10 nm units between 2016 and 2017).

Leaking electricity causes heat-related issues in transistors. As the number of transistors in modern microprocessors has reached unprecedented levels (Intel’s Coffee Lake HEDT series packs 8.33 billion components), the resulting overheating has become a serious concern. To tackle this problem, CPU manufacturers have introduced throttling mechanisms that slow the chip down according to the heat generated.

Economic limitations

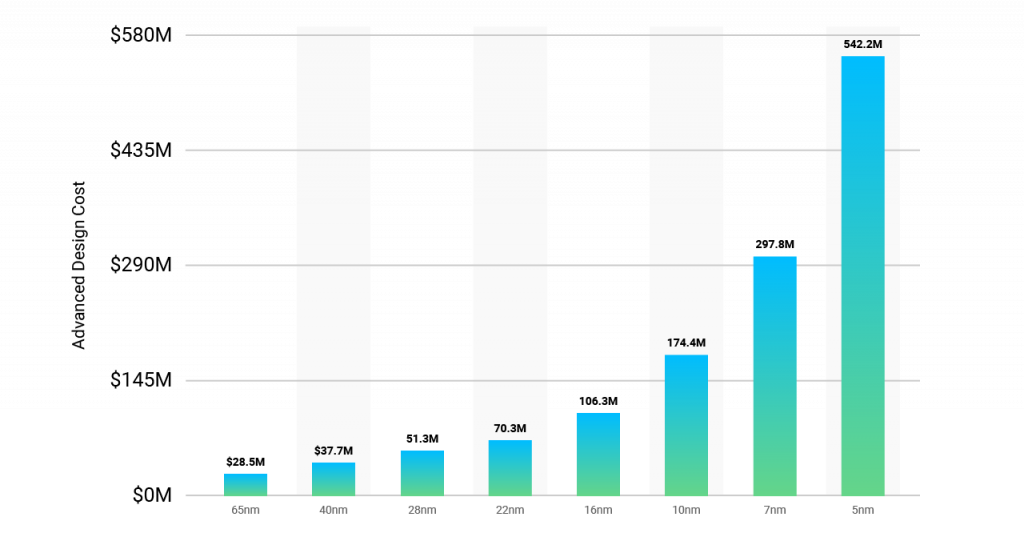

Cramming more and more transistors into a single device at each generation requires fabs — the semiconductor fabrication plants — to invest tremendous amounts of resources in upgrades and new equipment. As a result, the cost of these facilities has boomed from a few million dollars in the first years of the industry to the several billion dollars of today. Intel and TSMC seem to be pushing the edge further with an $11 billion plant in Israel and a $19.6 billion plant in Taiwan, respectively.

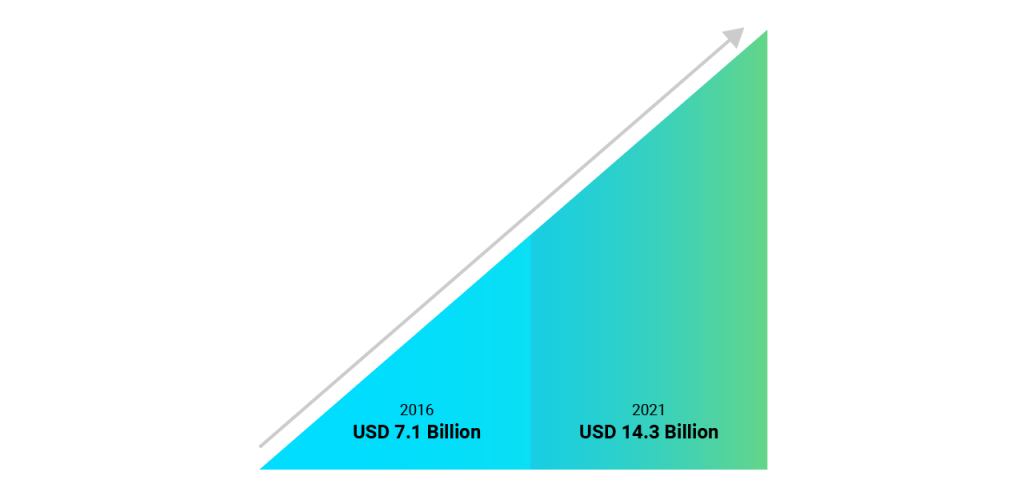

The businesses relying on these chips have seen their costs rise as well. The overheating issues mentioned above have forced them to invest in increasingly complex cooling systems, especially businesses that operate large server rooms housing the most advanced microprocessors. Market research confirms this trend. In particular, one study reported that the data center cooling market is expected to grow at a CAGR of 14.9% between 2016 and 2021: a clear sign that more and more businesses are relying on these systems.
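As a rough check on what that growth rate implies, the sketch below compounds a 14.9% CAGR over the five years from 2016 to 2021. The function name is ours, and the result is expressed as a multiple of the 2016 market size because the study's absolute figures are not quoted here.

```python
def compound_growth(cagr: float, years: int) -> float:
    """Return the total growth multiple after compounding `cagr` for `years` years."""
    return (1 + cagr) ** years


multiple = compound_growth(cagr=0.149, years=5)
print(f"Growth multiple over 5 years: {multiple:.2f}x")
```

In other words, a 14.9% CAGR means the market roughly doubles over the period.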

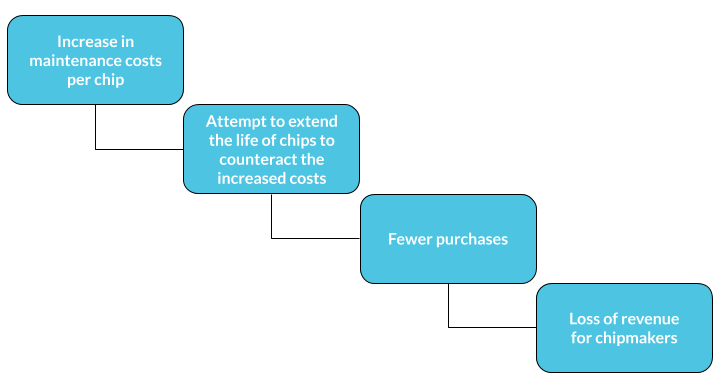

To offset these new costs, companies are now doing all they can to extend the life of their components, which translates into lost profits for chipmakers. A shrunken revenue stream means that CPU manufacturers have less capital to invest in R&D, which is the stage at which the technical limitations mentioned above are dealt with.

Multi-core processors and GPUs: a step forward

Even though it has become problematic to increase the number of transistors in a single component, chipmakers have found other ways to increase the computational capabilities of microprocessors.

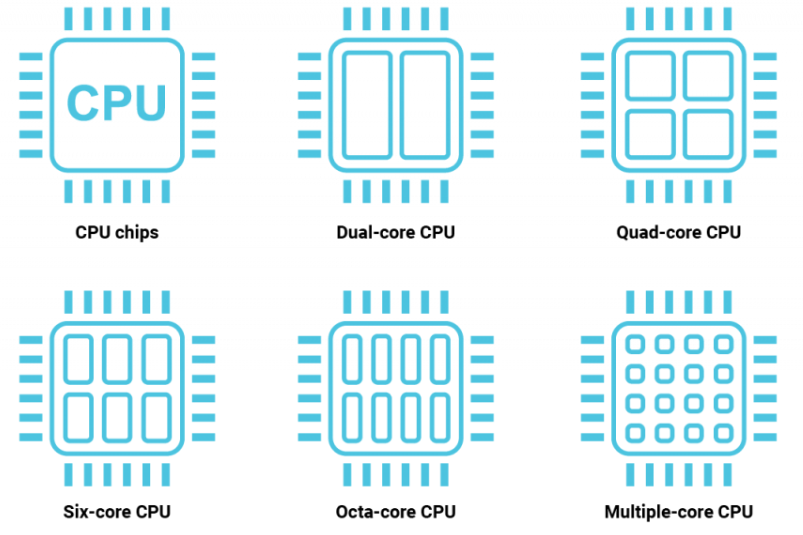

In the early 2000s, Intel and AMD launched their first multi-core processors. Instead of relying solely on a higher density of transistors in a single processing unit, these components combine multiple processing units in one package: the individual units, or cores, can execute multiple instructions in parallel, increasing the overall performance. Over time, the number of cores has increased to reach the current standard of eight.
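The idea can be sketched in a few lines of Python using the standard library's `concurrent.futures` module. This is only an illustration under our own assumptions: the workload (a sum of squares), the helper names, and the cap of eight workers are all hypothetical choices.

```python
from concurrent.futures import ProcessPoolExecutor
import os


def sum_of_squares(chunk: list[int]) -> int:
    """CPU-bound work carried out independently by one core."""
    return sum(n * n for n in chunk)


def split_into_chunks(numbers: list[int], parts: int) -> list[list[int]]:
    """Split the workload into `parts` interleaved slices, one per worker."""
    return [numbers[i::parts] for i in range(parts)]


def parallel_sum_of_squares(numbers: list[int], workers: int) -> int:
    """Hand each chunk to a separate process and combine the partial results."""
    chunks = split_into_chunks(numbers, workers)
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(sum_of_squares, chunks))


if __name__ == "__main__":
    numbers = list(range(1_000_000))
    # Use the logical cores reported by the OS, capped at eight (an arbitrary choice).
    workers = min(os.cpu_count() or 1, 8)
    print(parallel_sum_of_squares(numbers, workers))
```

Each worker process handles its own slice of the data, so on a multi-core machine the slices can be processed at the same time rather than one after another.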

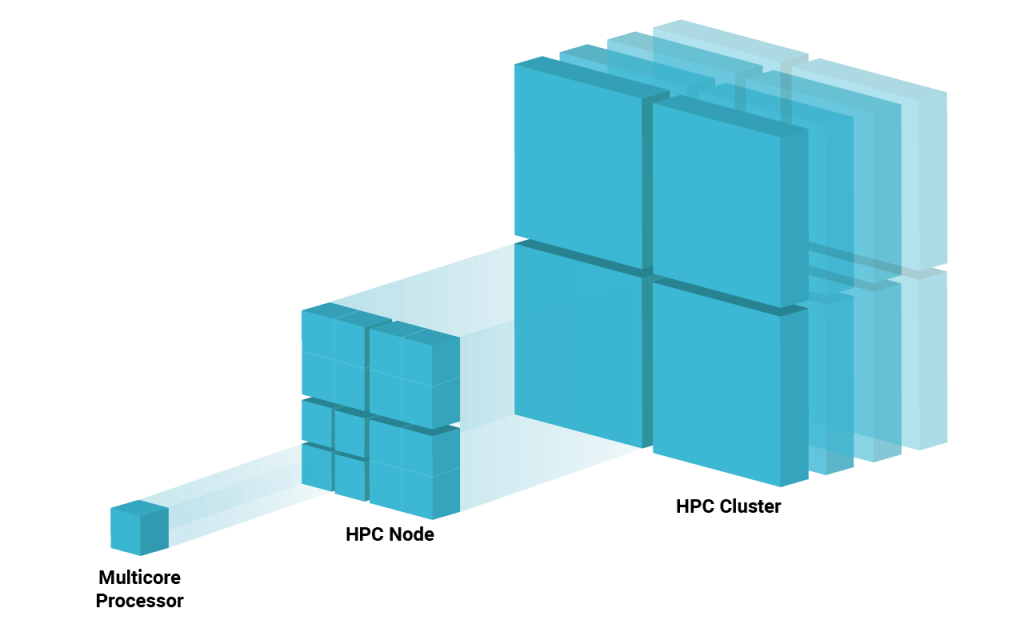

The concept of parallel computing has been pushed to an extreme by high-performance computing (HPC), in which multiple machines or nodes are clustered together to form a network. Arranged in this way, the system is capable of solving problems that none of the individual computers could tackle on its own.

Another solution to the limitations of Moore’s law is the use of GPUs, which were originally designed to perform a vast number of parallel calculations to support the demanding graphics of video games. Today, the GPU’s parallel computing capabilities are applied to other use cases, mainly machine learning and data analysis, in which GPUs work in conjunction with CPUs to accelerate the most demanding tasks.

Nvidia’s shift toward data centers makes much more sense now. With decades-long expertise in GPUs — the term became popular in 1999 when the GeForce 256 video card was marketed as “the world’s first GPU” — the company saw an opportunity in the new market and decided to devote part of its GPU manufacturing capabilities to HPC customers.

The new frontier of computing lies in the combination of these two “workarounds”: high-performing GPUs operating within large HPC clusters. With the speed issues taken care of, what matters in these configurations is how each node is connected to the others. Slow or unreliable connections, in fact, would counteract any improvements in speed and efficiency.
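A toy model helps show why the interconnect matters so much. In the sketch below, the work divides evenly across nodes, but every additional node adds a fixed communication cost. Both the model and all of its numbers are assumptions made purely for illustration; real clusters behave in far more complex ways.

```python
def cluster_runtime(compute_seconds: float, nodes: int, comm_seconds_per_link: float) -> float:
    """Toy model: evenly divided compute time plus a fixed cost per extra node.

    The formula and its parameters are illustrative assumptions, not measurements.
    """
    return compute_seconds / nodes + comm_seconds_per_link * (nodes - 1)


job = 1000.0  # seconds the job would take on a single node (hypothetical)

for label, cost in [("fast interconnect", 0.5), ("slow interconnect", 20.0)]:
    runtime = cluster_runtime(job, nodes=16, comm_seconds_per_link=cost)
    print(f"{label}: {runtime:.1f} s on 16 nodes, speedup {job / runtime:.1f}x")
```

With these made-up numbers, the same 16 nodes deliver a speedup of roughly 14x over the fast interconnect but less than 3x over the slow one.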

And this is exactly how Mellanox appeared on Nvidia’s radar. To discover why, let’s take a look at the brief company profiles below.

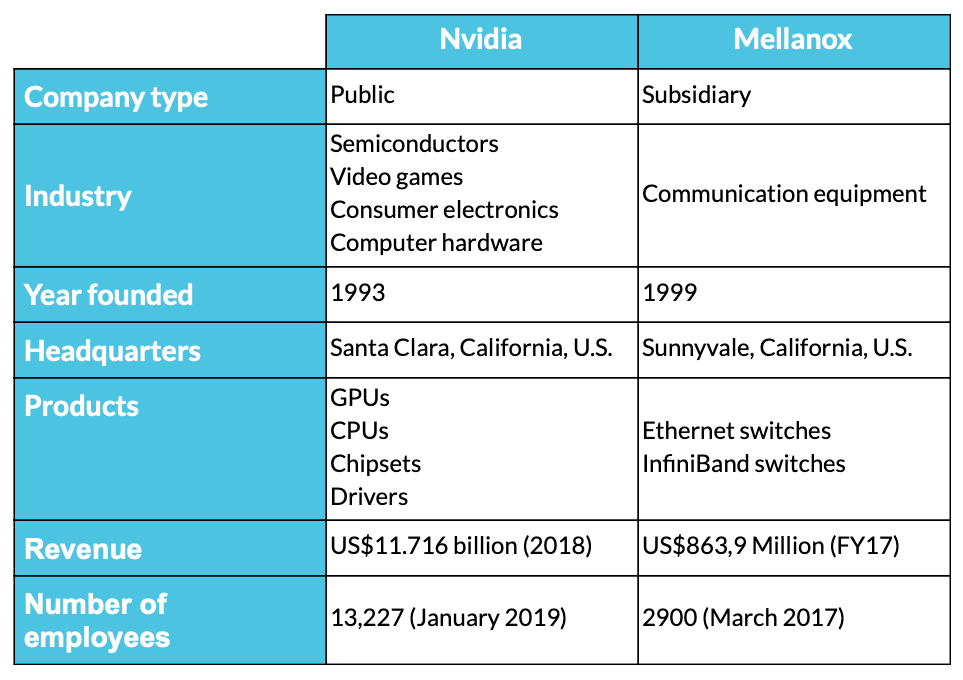

Nvidia is a Santa Clara-based American tech firm. Incorporated in Delaware, Nvidia is one of the world’s leading creators of graphics processing units (GPUs) that aim to improve the experience of computing for the gaming industry as well as various professional markets. Nvidia is also regarded as a key designer of system-on-a-chip units (SoCs) for the mobile computing and automotive markets. Since 2014, Nvidia has expanded its core business, focusing on five main markets:

- Gaming

- Professional visualization

- Data centers

- In-car entertainment

- Artificial intelligence

Besides producing GPUs, Nvidia provides parallel processing capabilities to researchers and scientists, allowing them to run high-performance applications more efficiently; its products are deployed at supercomputing sites around the world. More recently, Nvidia entered the mobile computing market, manufacturing Tegra mobile processors for tablets and smartphones as well as for vehicle navigation and in-car entertainment systems.

Mellanox is an Israeli-American multinational provider of computer networking products based on InfiniBand and Ethernet technologies. Mellanox was founded in 1999 by former Intel and Galileo Technology executives. It started by manufacturing integrated circuits and, by 2009, had developed into a producer of complete end-to-end systems.

Mellanox’s key areas of focus include software, adapters, switches, silicon, and cables. The markets Mellanox supplies are typically high-performance computing, cloud computing, data centers, and computer data storage.

California-based multinational computer technology corporation Oracle became a significant investor in Mellanox in 2010, holding approximately 10% of its stock. Mellanox then went on to acquire the data center switch provider Voltaire Ltd. in February 2011 in a $218 million deal.

By 2016, Mellanox had revenues of $857 million, and in December 2017, Mellanox announced that it would launch a new startup accelerator. Mellanox shares rose by 55 percent in 2017, and it made its most substantial acquisition with EZchip in the same year.

It is clear how Mellanox’s expertise in high-speed networking hardware — especially InfiniBand switches — is exactly what Nvidia needed at that moment of transition: the acquisition can be seen as a way of bringing important know-how in-house. But did it really?

The Mellanox acquisition from a patent perspective

The best way to confirm whether the acquisition was beneficial for Nvidia beyond the financial aspect is to look at the companies’ patent portfolios. To do so, we used Patent Search and Due Diligence by Patentcloud to identify and evaluate the patent assets of the two companies.

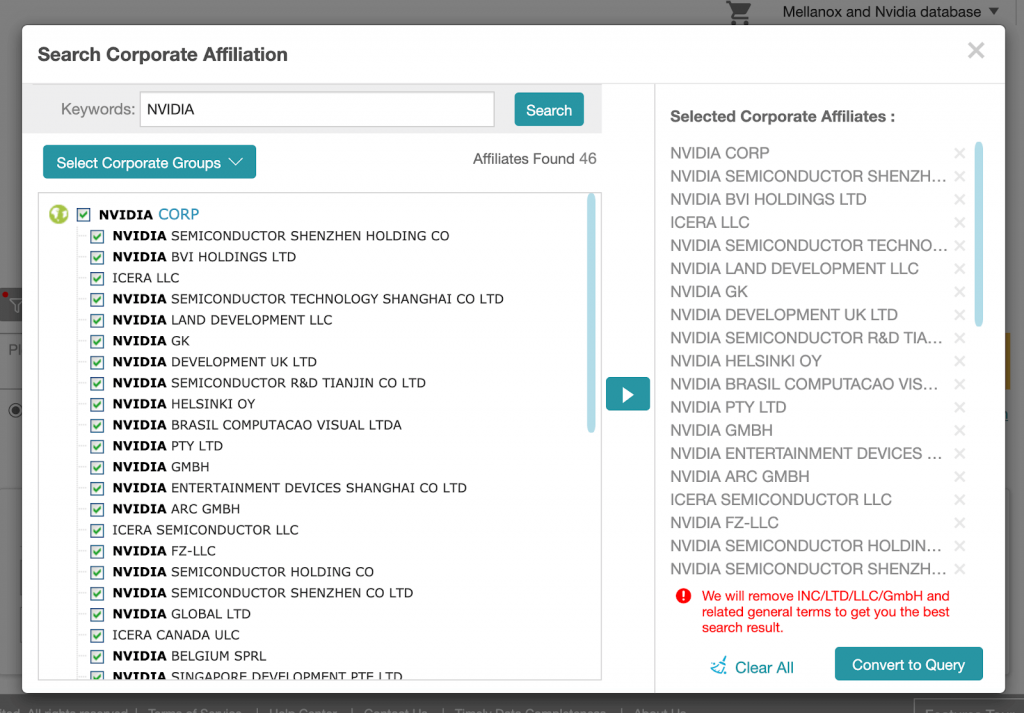

In particular, we relied on the Search Corporate Affiliation function in Patent Search to identify affiliates and subsidiaries of each of the two companies, making sure to detect all the patents held by the two groups.

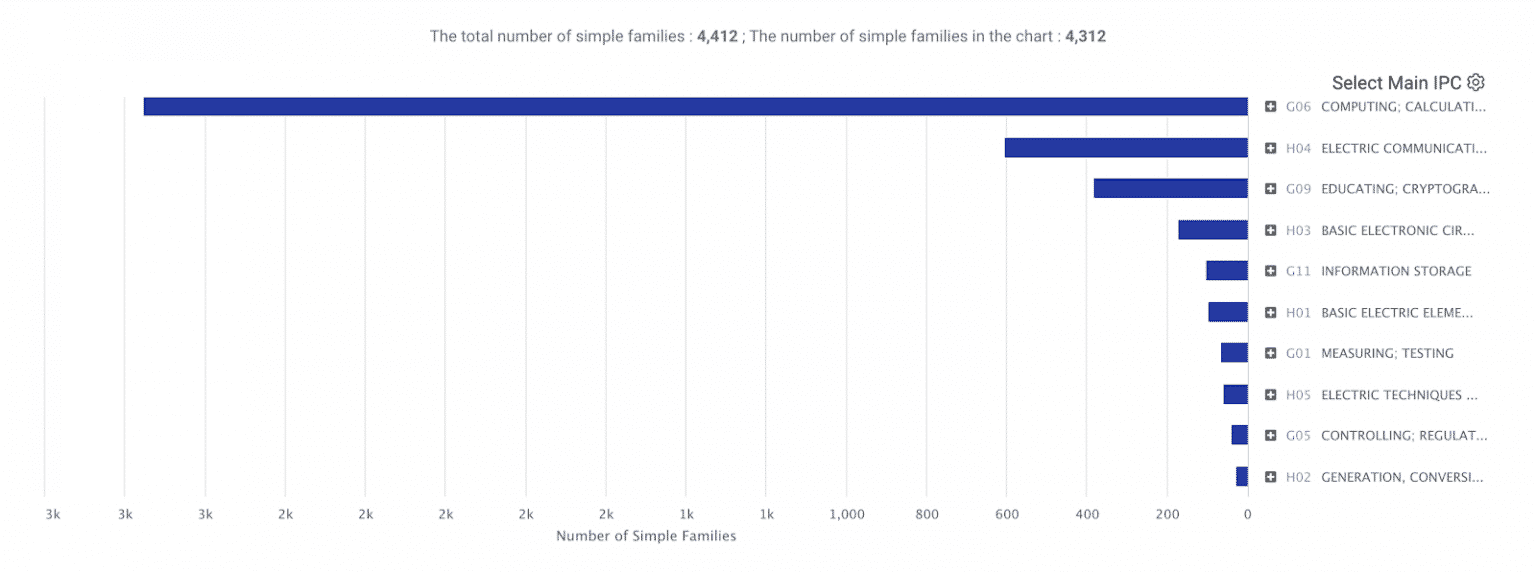

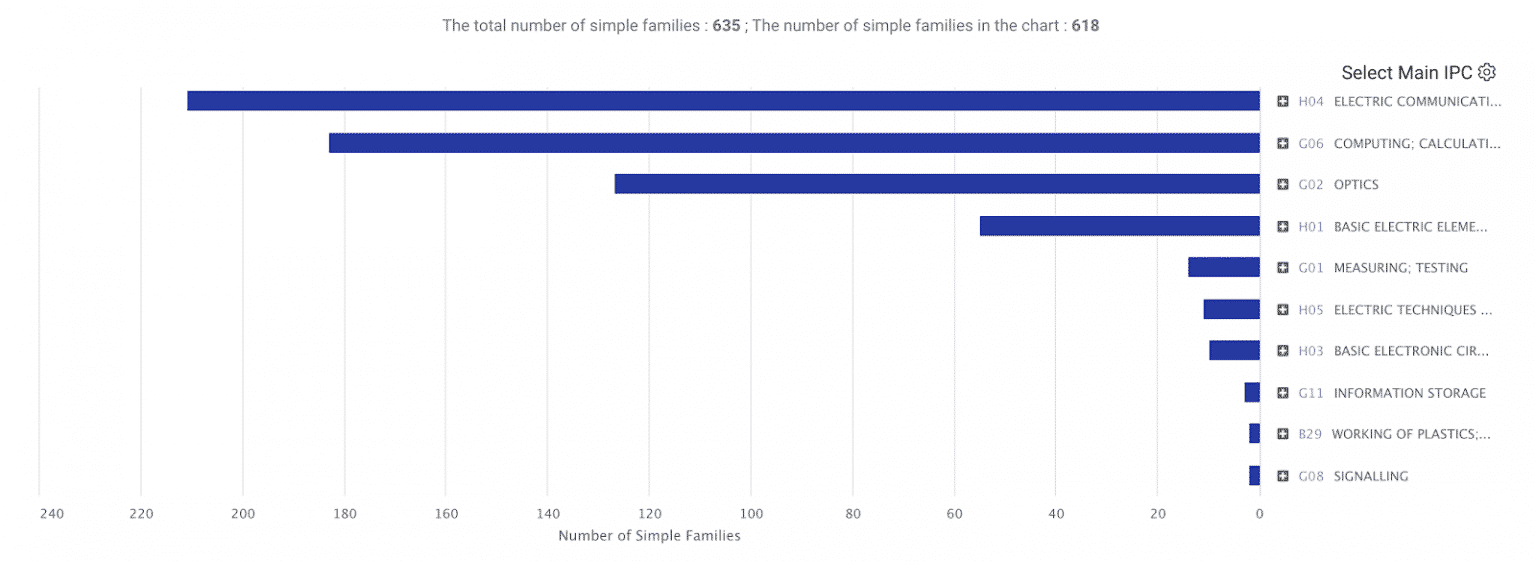

For the purpose of this article, we will focus on the Technical Fields dashboard. As we can see from the image below, most of Nvidia’s patents (2,756 patent families) are filed with the main IPC class* being G06 — COMPUTING; CALCULATING; COUNTING.

If we take a look at WIPO’s portal containing the details of each class, we find that class G06 covers:

- Simulators which are concerned with the mathematics of computing the existing or anticipated conditions within the real device or system;

- Simulators which demonstrate, by means involving computing, the function of apparatus or of a system, if no provision exists elsewhere;

- Image data processing or generation.

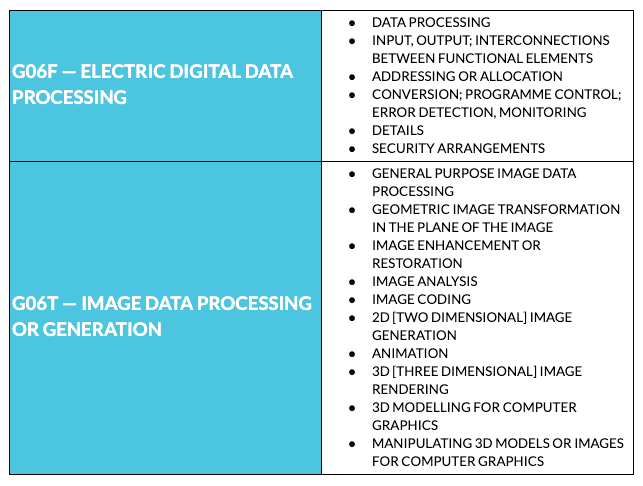

By expanding the chart further, we notice that the leading subclasses are G06F — ELECTRIC DIGITAL DATA PROCESSING (1,748 patent families) and G06T — IMAGE DATA PROCESSING OR GENERATION (817 patent families), which cover the following:

All of these findings are in line with what one would expect from a company like Nvidia, which was born as a graphics card manufacturer before moving into the data center segment. Now, let’s take a look at the Mellanox portfolio’s technology distribution.

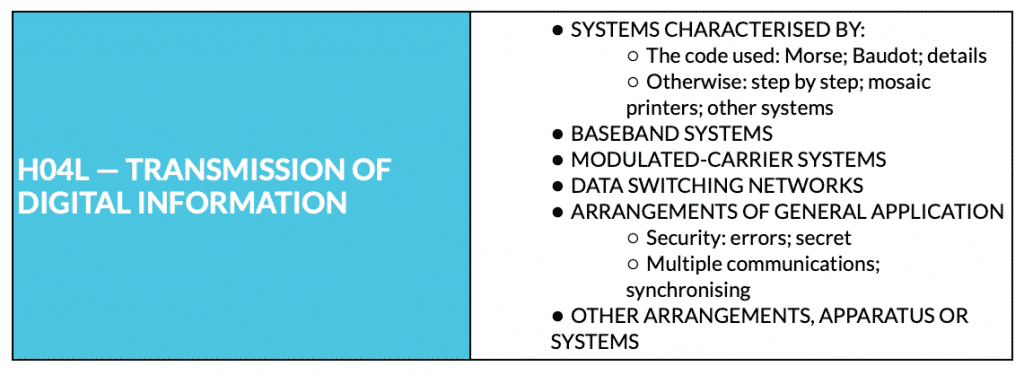

Occupying the top spot, with 211 patent families, we find class H04 — ELECTRIC COMMUNICATION TECHNIQUE, which relates to the “transmission of information-carrying signals” by means of radio or optical communication. The major subclass under it, with 173 patent families, is H04L — TRANSMISSION OF DIGITAL INFORMATION, which covers the following:

In second position, with 183 patent families, we notice class G06F — ELECTRIC DIGITAL DATA PROCESSING (Nvidia’s first), which probably derives from:

- The company’s early years as an integrated circuits manufacturer;

- The experience matured after 2009’s shift toward end-to-end systems.

Overall, the chart confirms Mellanox’s focus on developing networking hardware and HPC solutions, offering an explanation of why Nvidia decided to acquire the Israeli-American company. This is even clearer when comparing the different weights that class H04 has in the two portfolios: a little more than 13% in Nvidia’s against over 33% in Mellanox’s.

It is worth mentioning that the number of patents included in that 13% is nevertheless significant considering the overall size of Nvidia’s portfolio. According to our experts, this might indicate that the company attempted to develop networking hardware on its own, filing a large number of patents (which is usually not an issue for big-name companies with substantial financial resources). However, the technology developed in-house possibly did not yield the desired results, prompting Nvidia to look for a more focused company: Mellanox.

It’s not all about numbers

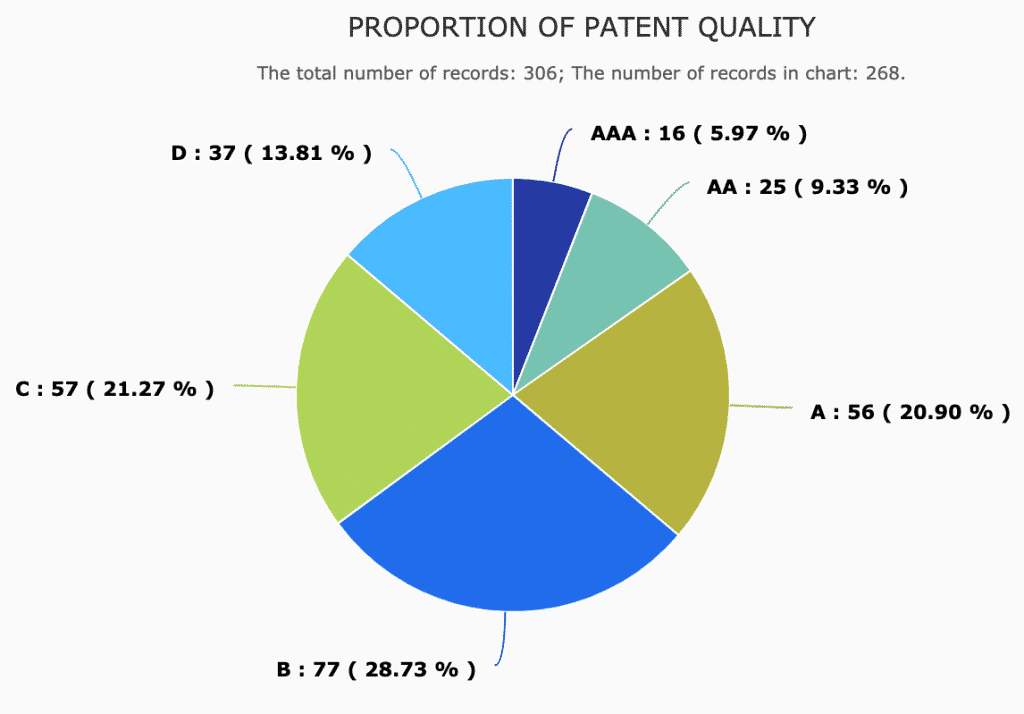

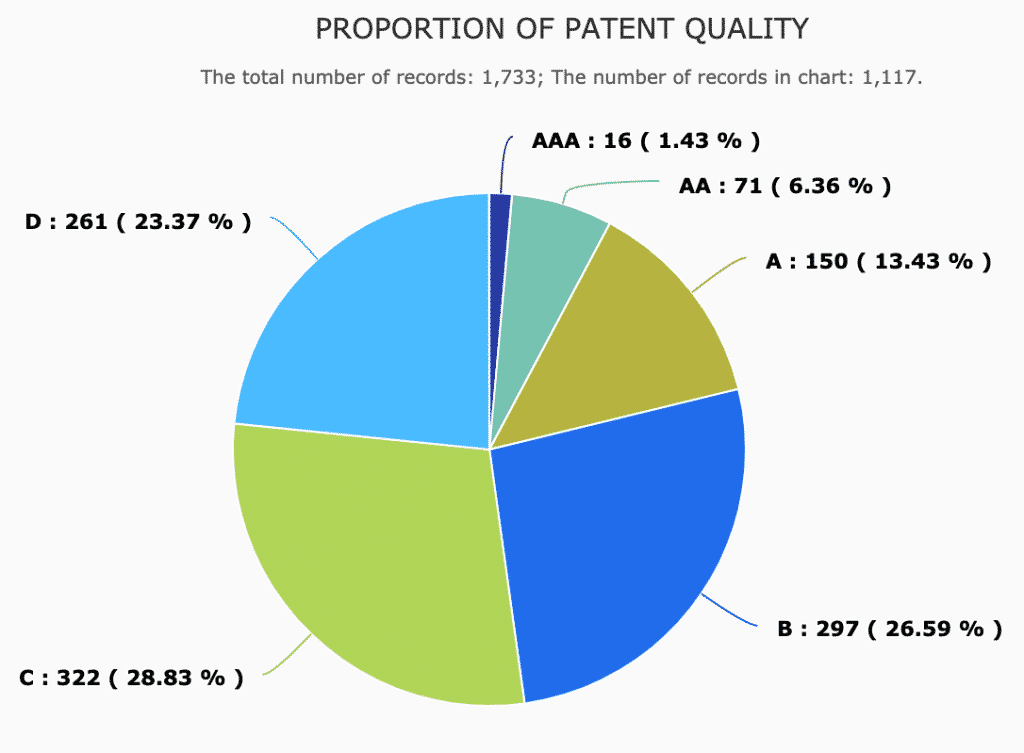

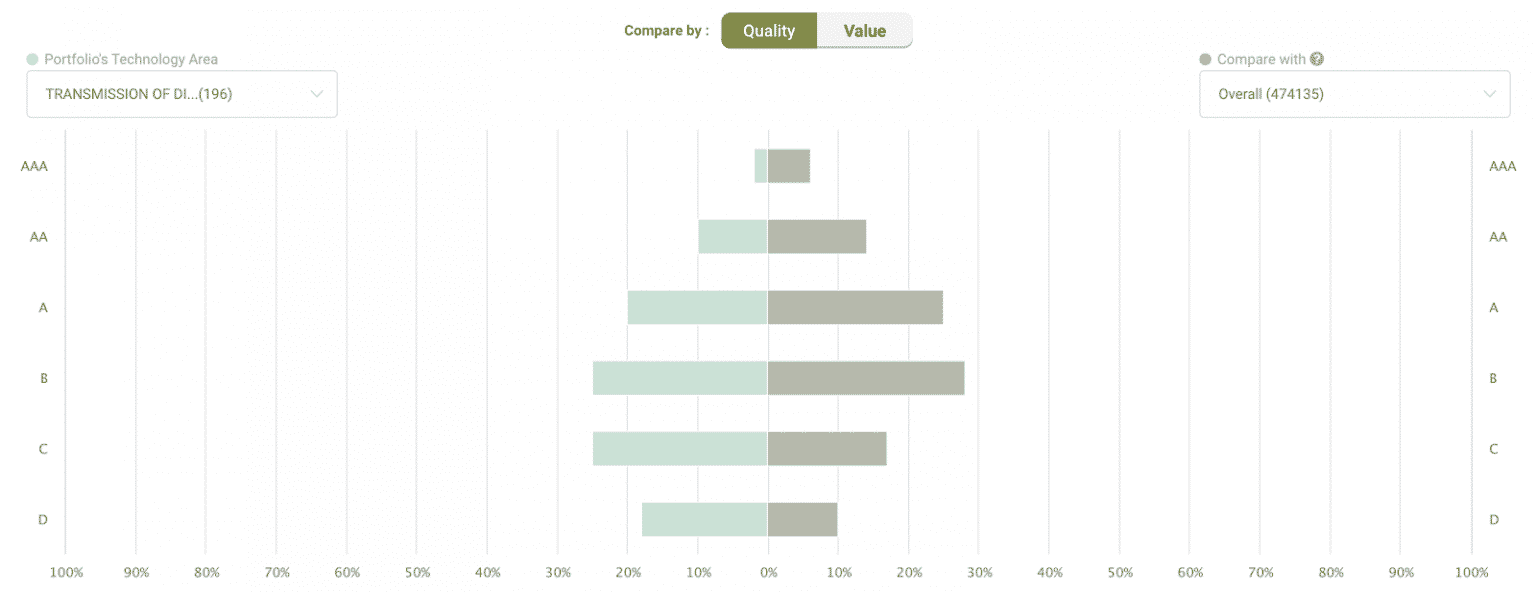

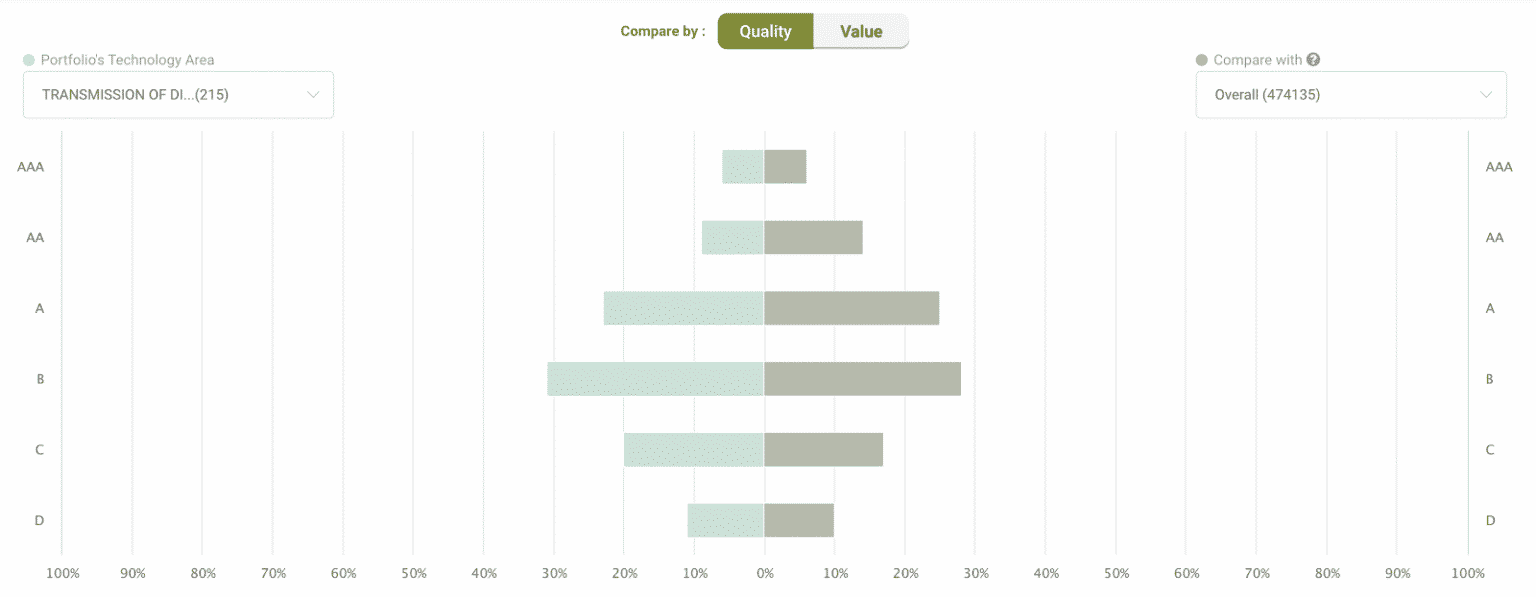

As we have stressed several times before, quality is much more important than quantity when it comes to patents. Using the Quality Ranking measure developed by InQuartik, we grouped patents into six levels: AAA, AA, A, B, C, and D. High-quality patents are defined as patents whose quality score is in the 25th percentile or above, and they are rated A, AA, or AAA. (For more information, see our whitepaper below.)

We took a look at the share of high-quality patents among those in class H04:

The proportion of patents ranked AAA, AA, or A for Nvidia is 21.22% while for Mellanox it is 36.2%. We then observed how these patents perform against the industry average, finding that Mellanox outperforms Nvidia with a higher proportion of AAA and A-ranked assets and a lower proportion of C and D-ranked assets compared to industry peers.
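For readers who want to reproduce this kind of figure on their own data, the share of high-quality patents is simply the number of assets rated A or better divided by the size of the portfolio. The sketch below shows the calculation; the function name and the ten-patent sample are hypothetical and do not reflect either company's actual ratings.

```python
from collections import Counter

# Ratings that count as high quality under the definition given above.
HIGH_QUALITY = {"AAA", "AA", "A"}


def high_quality_share(ratings: list[str]) -> float:
    """Return the percentage of patents rated A, AA, or AAA."""
    if not ratings:
        return 0.0
    counts = Counter(ratings)
    high = sum(counts[rating] for rating in HIGH_QUALITY)
    return 100 * high / len(ratings)


# Hypothetical ratings for a ten-patent portfolio (not real data).
sample = ["AAA", "AA", "A", "B", "B", "C", "C", "C", "D", "D"]
print(f"High-quality share: {high_quality_share(sample):.1f}%")
```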

Closing thoughts

It should be clear by now that Nvidia’s acquisition of Mellanox is a big deal not only for the companies involved — which will surely enjoy higher revenues in the near future — but most importantly for the computing industry overall. With the end of Moore’s law on one side and an increasing load of data to process on the other, the sector’s stakeholders had to come up with new solutions to back up today’s tech revolution. What Nvidia and Mellanox are doing has the potential to be exactly what is needed, and it might even become a successful model for the other players.

In recent months, many have asked: was the deal worth the $7 billion in cash that Nvidia paid? With the future of computing at stake, we can confidently say that it was, and patents seem to back us up.
